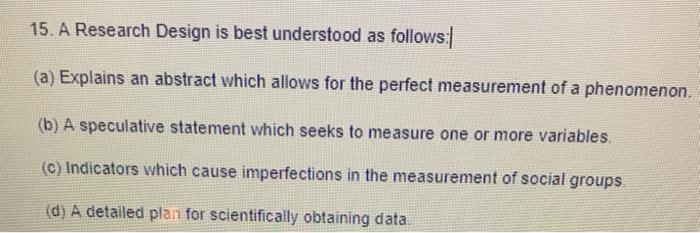

Question: 15. A Research Design is best understood as follows:

- (a) Explains an abstract which allows for the perfect measurement of a phenomenon.
- (b) A speculative statement which seeks to measure one or more variables.
- (c) Indicators which cause imperfections in the measurement of social groups.
- (d) A detailed plan for scientifically obtaining data.

Identify the main purpose of a research design in the context of a Sociology 101 course, then compare each given option to see which best fits as a definition.

A detailed plan for scientifically obtaining the data …
